Intro

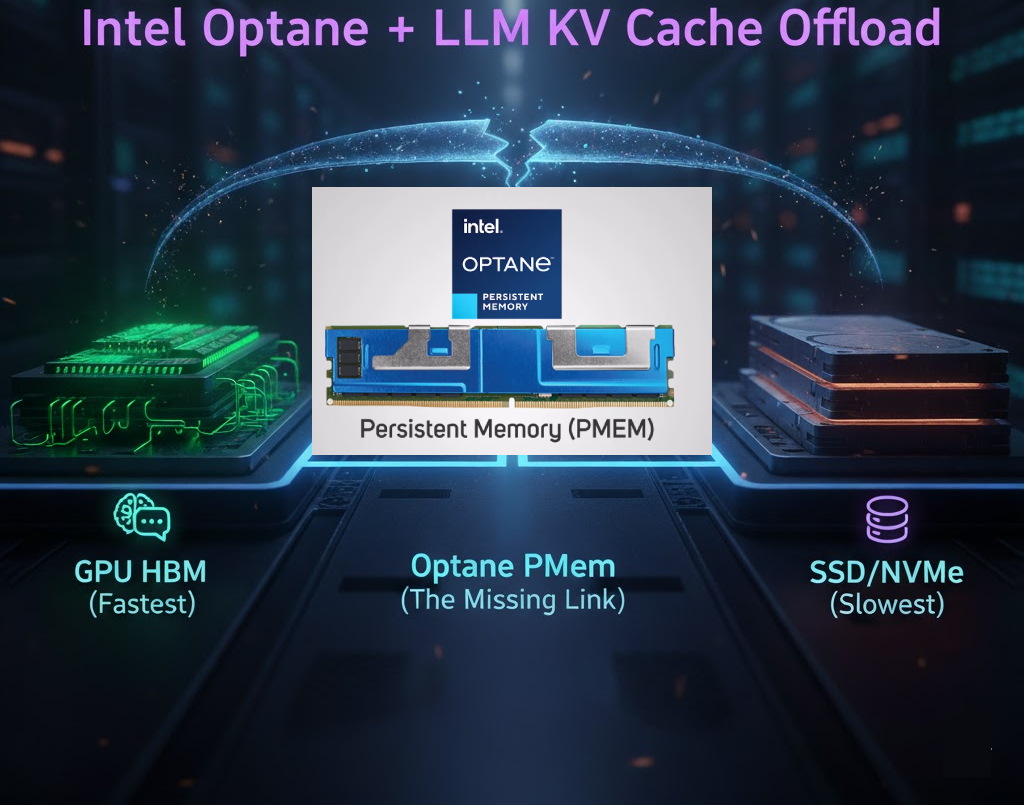

A few weeks ago I wrote about why Intel Optane Persistent Memory was the ideal technology for LLM KV-cache offloading: near-DRAM latency combined with native non-volatility. In other words, it behaved like memory but survived reboots. I also explained why CXL wasn’t quite its equivalent, due to higher latency and lack of persistence.

But recently other big players like NVIDIA have started to take a crack at this challenge. The hardware implementation of their solution was introduced as Inference Context Memory Storage (ICMS) and has since been renamed the CMX platform.

I. KV Cache: The New Inference Bottleneck

Every time an LLM processes a prompt, it saves its intermediate attention state (the Key and Value tensors) in a super-fast scratchpad called the KV Cache. Reusing that state instead of recomputing it shortens the time until the first word appears in your chat output (Time to First Token).

💡 If you or your agent ask similar questions or use RAG with repetitive prompts, the cached “context” is reused. Your LLM skips recomputing the shared part of the prompt and starts generating the first word of its response much sooner.

The Bottleneck:

- GPU is Already Full: The GPU’s ultra-fast memory (HBM) is already largely consumed by the model weights.

- Cache Overload: On top of that, the AI’s working memory (KV Cache) explodes as context gets larger.

- Limits Scale: KV Cache size grows with both context length and the number of concurrent users, so memory pressure multiplies quickly and KV Cache becomes the bottleneck.

🟥 The new inference wall isn’t compute. It’s Context (KV Cache).
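To make the memory pressure concrete, here is a rough back-of-the-envelope sketch of KV Cache sizing. The model shape (32 layers, 8 KV heads, head dimension 128, 2-byte values) is a hypothetical example chosen for illustration, not a figure from any vendor; plug in your own model's numbers.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   num_tokens: int, batch_size: int = 1,
                   bytes_per_value: int = 2) -> int:
    """Bytes needed to hold keys and values for every layer and token."""
    # Two tensors (K and V) per layer, each num_kv_heads * head_dim values per token.
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return per_token * num_tokens * batch_size


# Hypothetical model shape, for illustration only.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128

per_token = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, num_tokens=1)
one_user = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, num_tokens=32_768)
many_users = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM,
                            num_tokens=32_768, batch_size=64)

print(f"per token:             {per_token / 1024:.0f} KiB")
print(f"32K context, 1 user:   {one_user / 2**30:.0f} GiB")
print(f"32K context, 64 users: {many_users / 2**30:.0f} GiB")
```

Under these assumptions a single 32K-token conversation needs about 4 GiB, and 64 of them need 256 GiB, which is more than the HBM on most current GPUs before the weights are even loaded.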

KV Cache Offloading

The answer to the scaling challenges of KV Cache in LLM inference is KV Cache Offloading: moving inactive, older parts of a huge KV Cache out of expensive GPU memory into cheaper, larger storage such as CPU RAM, SSD, or S3. When the data is needed again, it is swapped back onto the GPU.

The tiers consist of:

- G1 (GPU HBM): for hot, latency‑critical KV used in active generation

- G2 (System DRAM): for staging and buffering KV off HBM

- G3 (Local SSDs/NVMe): for warm KV that is reused over shorter timescales

- G4 (Shared Storage), including cache DBs (Redis): for cold artifacts, history, and durable/non-critical results
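The tier list above can be sketched as a toy cache hierarchy. This is a minimal illustration, assuming simple LRU demotion and promote-on-hit; the class name `TieredKVCache` and the block counts are made up for the example, and real engines use far more elaborate policies.

```python
from collections import OrderedDict


class TieredKVCache:
    """Toy G1..G4 hierarchy: LRU eviction demotes blocks one tier down."""

    def __init__(self, capacities):
        # capacities: list of (tier_name, max_blocks), fastest tier first.
        self.names = [name for name, _ in capacities]
        self.limits = [limit for _, limit in capacities]
        self.tiers = [OrderedDict() for _ in capacities]

    def _insert(self, level, key, block):
        tier = self.tiers[level]
        tier[key] = block
        tier.move_to_end(key)
        # Over capacity: demote the least recently used block to the next tier.
        while len(tier) > self.limits[level]:
            old_key, old_block = tier.popitem(last=False)
            if level + 1 < len(self.tiers):
                self._insert(level + 1, old_key, old_block)
            # Blocks falling off the last tier are dropped and must be recomputed.

    def put(self, key, block):
        for tier in self.tiers:  # drop any stale copy first
            tier.pop(key, None)
        self._insert(0, key, block)

    def get(self, key):
        """Return (tier_name, block) and promote the block to G1, or None on a miss."""
        for level, tier in enumerate(self.tiers):
            if key in tier:
                block = tier.pop(key)
                self._insert(0, key, block)
                return self.names[level], block
        return None


cache = TieredKVCache([("G1", 2), ("G2", 2), ("G3", 4), ("G4", 8)])
for i in range(5):
    cache.put(f"ctx-{i}", b"kv-block")

first_hit = cache.get("ctx-0")   # oldest context has been demoted twice
second_hit = cache.get("ctx-0")  # now hot again after promotion
print(first_hit[0], second_hit[0])
```

The point of the sketch is the shape of the data flow: hot contexts stay near the GPU, cold ones sink toward shared storage, and a hit anywhere pulls the block back up.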

The Offload Trap

The downside is the target medium itself, as the success of offloading relies entirely on its speed and persistence:

- DRAM (fast but expensive): great speed, but high cost and lack of persistence make it impractical for large caches.

- SSD/NVMe (cheap but slow): great capacity, but higher latency hurts the Time-to-First-Token (TTFT) benefit.
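One way to reason about this trade-off is a simple break-even check: offloading only pays off when reloading the cache is faster than recomputing it. The sketch below is illustrative; every bandwidth, latency, and prefill-throughput number in it is a placeholder assumption, not a measurement, so substitute figures from your own hardware.

```python
def reload_seconds(kv_bytes, bandwidth_bytes_per_s, latency_s):
    """Time to bring an offloaded KV cache back: fixed latency plus transfer."""
    return latency_s + kv_bytes / bandwidth_bytes_per_s


def recompute_seconds(num_tokens, prefill_tokens_per_s):
    """Time to rebuild the same KV cache by re-running prefill."""
    return num_tokens / prefill_tokens_per_s


def worth_offloading(num_tokens, kv_bytes_per_token, tier, prefill_tokens_per_s):
    bandwidth, latency = tier
    kv_bytes = num_tokens * kv_bytes_per_token
    return (reload_seconds(kv_bytes, bandwidth, latency)
            < recompute_seconds(num_tokens, prefill_tokens_per_s))


# All values below are assumed placeholders for illustration.
KV_BYTES_PER_TOKEN = 128 * 1024   # hypothetical model
PREFILL_TOKENS_PER_S = 10_000     # hypothetical GPU prefill speed
TIERS = {                         # (bytes per second, seconds of latency)
    "DRAM": (50e9, 1e-6),
    "NVMe SSD": (5e9, 100e-6),
    "Object storage": (0.5e9, 50e-3),
}

for name, tier in TIERS.items():
    ok = worth_offloading(32_768, KV_BYTES_PER_TOKEN, tier, PREFILL_TOKENS_PER_S)
    print(f"{name:15s} reload beats recompute: {ok}")
```

With these made-up numbers, DRAM and NVMe reloads win while the slowest tier loses to plain recomputation, which is exactly the trap: a tier that is too slow erases the TTFT benefit.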

II. Software solutions (LMCache)

LMCache is the primary vendor-neutral alternative for KV cache management. The open-source project, developed at the University of Chicago, provides hierarchical KV cache storage and sharing capabilities that work across multiple hardware platforms including NVIDIA GPUs, AMD MI300X accelerators, and Intel Gaudi 3 processors.

LMCache integrates with the vLLM and SGLang inference engines to enable KV cache offloading to CPU RAM, local storage, and network storage such as S3 or Redis. The system supports cache offloading for prefix reuse across queries, compression (CacheGen), CacheBlend, and prefill-decode disaggregation for cross-engine cache transfer.
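To give a feel for what prefix reuse across queries means, here is a generic sketch of chunked prefix hashing. It is not LMCache's actual implementation or API; the function names, the tiny chunk size, and the in-memory `set` standing in for a storage backend are all assumptions made for illustration.

```python
import hashlib

CHUNK_TOKENS = 4  # deliberately tiny for the example


def chunk_keys(token_ids, chunk_tokens=CHUNK_TOKENS):
    """One chained hash per full chunk; each key identifies the whole prefix so far."""
    keys = []
    prev = b""
    for start in range(0, len(token_ids) - chunk_tokens + 1, chunk_tokens):
        chunk = token_ids[start:start + chunk_tokens]
        # Chaining in the previous digest makes a key depend on everything before it.
        digest = hashlib.sha256(prev + repr(chunk).encode()).digest()
        keys.append(digest.hex())
        prev = digest
    return keys


def cached_prefix_tokens(token_ids, store, chunk_tokens=CHUNK_TOKENS):
    """Number of leading tokens whose KV blocks are already in the store."""
    hits = 0
    for key in chunk_keys(token_ids, chunk_tokens):
        if key not in store:
            break
        hits += 1
    return hits * chunk_tokens


# A first request populates the store (a set stands in for RAM/SSD/S3).
store = set(chunk_keys([1, 2, 3, 4, 5, 6, 7, 8]))

same_prefix = cached_prefix_tokens([1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12], store)
diverges_early = cached_prefix_tokens([1, 2, 3, 4, 99, 6, 7, 8], store)
print(same_prefix, diverges_early)
```

A request that shares the first eight tokens can skip prefill for those eight; one that diverges after the first chunk only reuses four.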

Platform & storage agnostic

Unlike NVIDIA’s CMX, which requires BlueField-4 DPUs and Spectrum-X Ethernet, LMCache operates over standard TCP/IP/RDMA networking and works with commodity storage infrastructure. It’s an approach prioritizing ecosystem compatibility over specialized hardware acceleration like NVIDIA’s BlueField.

III. Hardware Solutions

What Is NVIDIA CMX?

CMX (formerly Inference Context Memory Storage) is NVIDIA’s purpose-built hardware platform for storing and serving KV cache context at rack scale. It is powered by BlueField-4 DPUs and sits as a G3.5 tier in NVIDIA’s tiered KV cache architecture, bridging the gap between in-pod local SSDs (G3) and off-pod shared storage (G4) over RDMA networking.

Think of it as a network-attached SSD storage array that is AI-inference-aware. Instead of just storing, it understands context, orchestrating intelligent pre-staging so that relevant KV cache data is ready before the GPU asks for it.

Key building blocks:

- BlueField-4 DPUs — 64-core Arm processors managing NVMe context storage

- 800 Gb/s networking — RDMA over Spectrum-X Ethernet

- PCIe Gen6 x16 host interface

- DOCA storage frameworks — software layer orchestrating context pre-staging

- Up to 16 TB of context memory per GPU, and up to 150 TB per BlueField-4 DPU
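For a sense of scale, the figures above can be turned into rough arithmetic. The 16 TB and 800 Gb/s values come from the list; the per-token KV size is a hypothetical assumption, decimal units are assumed, and the transfer time is a best case that ignores protocol overhead and flash read latency.

```python
TB = 10**12

CONTEXT_PER_GPU_BYTES = 16 * TB      # from the spec list above
LINK_BITS_PER_S = 800 * 10**9        # 800 Gb/s networking
KV_BYTES_PER_TOKEN = 128 * 1024      # assumed, depends entirely on the model

link_bytes_per_s = LINK_BITS_PER_S / 8                      # bits to bytes
tokens_per_gpu = CONTEXT_PER_GPU_BYTES // KV_BYTES_PER_TOKEN

context_tokens = 32_768
context_bytes = context_tokens * KV_BYTES_PER_TOKEN
best_case_transfer_s = context_bytes / link_bytes_per_s

print(f"link rate:        {link_bytes_per_s / 1e9:.0f} GB/s")
print(f"tokens per GPU:   {tokens_per_gpu:,}")
print(f"32K ctx transfer: {best_case_transfer_s * 1e3:.0f} ms at line rate")
```

Under these assumptions, 16 TB holds on the order of a hundred million tokens of context per GPU, and a 32K-token context moves in tens of milliseconds at line rate.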

NVIDIA CMX Data sheet:

⚡https://resources.nvidia.com/bluefield-4-dpu-datasheet

📺 Explore GTC related sessions.

What Was Intel Optane?

Intel Optane Persistent Memory, based on 3D XPoint technology, bridged the gap between volatile DRAM and slower storage. Think cheaper, higher-capacity RAM (up to 512 GB per stick) that persists across reboots. For LLM serving, that last part matters: with standard DRAM, a server reboot wipes the entire KV cache. With Optane, it does not.

With Optane, an LLM server farm could recover near-instantly after maintenance or failure. No costly recomputation of every active user’s context. A resilience challenge the AI industry still hasn’t fully solved.
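The idea of a byte-addressable region that survives a restart can be sketched with a memory-mapped file. This is only a stand-in: an ordinary temp file replaces real persistent memory, the helper name `open_region` is made up, and actual Optane deployments relied on DAX-mounted filesystems and dedicated libraries rather than this simplified pattern.

```python
import mmap
import os
import struct
import tempfile


def open_region(path, size):
    """Create or reopen a file-backed region and map it into memory."""
    fd = os.open(path, os.O_RDWR | os.O_CREAT)
    try:
        if os.fstat(fd).st_size < size:
            os.ftruncate(fd, size)
        return mmap.mmap(fd, size)
    finally:
        os.close(fd)  # the mapping keeps its own handle


path = os.path.join(tempfile.mkdtemp(), "kv_region.bin")

# "Before reboot": write a few KV values straight into the mapped bytes.
region = open_region(path, 4096)
struct.pack_into("<4f", region, 0, 0.5, 1.5, -2.0, 3.25)
region.flush()
region.close()

# "After reboot": map the same region again and read the values back.
region = open_region(path, 4096)
restored = struct.unpack_from("<4f", region, 0)
region.close()

print(restored)
```

The cache contents are addressed like memory (offsets into a buffer) yet are still there after the mapping is torn down, which is the property that made Optane attractive for fast recovery.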

AMD: More Memory

AMD’s answer to the KV cache problem isn’t a new storage tier. It’s more memory. The AMD MI455X packs 432 GB of HBM4 with 19.6 TB/s of bandwidth per GPU, large enough to keep model weights and a substantial KV cache on-accelerator without offloading. The trade-off: higher per-GPU cost, no cross-instance cache sharing, and continued reliance on LMCache or vLLM’s PagedAttention when context spills over. It is not a purpose-built context storage layer like CMX.

IV. NVIDIA CMX vs. Intel Optane

KV cache is large, frequently accessed, and expensive to keep in GPU memory. What you really want is something that behaves close to memory, but persists like storage. This is why CMX and Intel Optane were picked for this comparison.

1. Architecture and Positioning

Optane Persistent Memory

- 3D XPoint memory in DDR4 DIMM slots

- Acts as a direct memory-tier extension (RAM sticks)

- Around 100 to 300 nanoseconds latency

- Byte addressable

- Native persistence (survives reboots)

NVIDIA CMX

- Flash based NVMe Context storage tier

- G3.5: Sits between local SSD tier and shared storage tier

- Managed by BlueField 4 DPUs

- Network attached and rack scale

- Disaggregated virtual storage

- Limited to inferior GPU sparse attention mechanisms

2. Scale

Optane

- ~512 GB per DIMM

- Limited by memory slots

- A few terabytes per server at most

NVIDIA CMX

- Up to 16 TB of context memory per GPU

- Up to 150 TB behind each BlueField 4 DPU

- Designed for cluster level deployment

- Leverages NIXL and Dynamo for advanced sharing across AI nodes

3. Performance Claims

Optane

- Near DRAM latency (~100–300 ns)

- Byte addressable

NVIDIA CMX

- ~Microsecond latency (DPU-orchestrated prestaging)

- Claims 5x higher tokens per second versus traditional storage

- Claims 5x better power efficiency

4. Persistence Model

Optane

- Native non volatile memory

- Survives reboots

- True persistent KV cache potential

NVIDIA CMX

- Context treated as reusable but non durable

- Intelligent prestaging and orchestration

- Persistence is operational, not architectural

5. Availability

- Intel Optane: discontinued ☹️

- NVIDIA CMX (formerly ICMS): shipping H2 2026

CMX vs. Optane vs. CXL: Full Comparison

| Feature | Intel Optane PMem (3D XPoint) | CXL-Attached DRAM (Type 3) | NVIDIA CMX (BlueField-4) |
|---|---|---|---|
| Underlying Media | Proprietary Non-Volatile (3D XPoint) | Standard Volatile DRAM (DDR4/DDR5) | Flash-based NVMe (128 GB LPDDR5 + DPU orchestration) |
| Persistence | Native Persistence (Data survives power-off) | Volatile (Data is lost on power-off) | Quasi-persistent (reusable context, non-durable) |
| Latency | Very Low (Approx. 100-300 ns) – Close to DRAM | Low-to-Moderate (Approx. 170-400 ns) – Higher than local DRAM | Moderate (~microseconds with 64-core Arm DPU prestaging) |
| Bandwidth | High (DDR4-equivalent, **20-30 GB/s per module**) | Very High (PCIe 5 equivalent, up to 64 GB/s) | Very High (800 Gb/s network, PCIe Gen6 x16 host interface) |
| Protocol | Proprietary IMC/DDR Bus Slot | Open Standard (Runs over PCIe physical layer) | NVMe-oF + RDMA (Ethernet/InfiniBand, 800G capable) |
| Primary Value | Low-Latency Persistence + Capacity | Massive Capacity Expansion + Memory Pooling | Massive Scale (16 TB/GPU, 150 TB/DPU) + AI-native orchestration |

The CMX Catch

CMX is tightly coupled to the NVIDIA ecosystem. If your stack is AMD-based, you cannot take advantage of it. The ideal architecture would be a CPU-attached, vendor-agnostic context tier usable across heterogeneous GPU environments.

That still does not exist.

Takeaways

Optane was technically ahead of its time. It could have solved the KV cache problem at the memory tier, before LLM workloads made that problem mainstream. But it was trapped inside Intel’s shrinking ecosystem. NVIDIA CMX takes the same core insight: fast, persistent-like storage for ephemeral inference context. The difference is execution at scale. Instead of extending the CPU memory bus, NVIDIA extends the GPU cluster fabric with DPUs, RDMA, Spectrum-X Ethernet, and DOCA storage frameworks. Architecturally, it is less elegant than Optane, but operationally it is more scalable.

The irony? Intel killed Optane in 2022, just months before the LLM boom that would’ve made it essential.

The next frontier is not just smarter models. It is smarter memory.
